
Anyone with very little power who says they want the government to run like a business is probably saying they hate bureaucracy. Often for perfectly understandable reasons. Bureaucracies are like prosthetic limbs for society in the sense that they are answers by the government to questions brought about by a large government. They have to be fashioned top-down and imposed on society. Even a bureaucracy handing out goods and services generally feels domineering and arbitrary. Civil servants, in situ, outrank you. They are usually maddeningly rigid and dull.

The end game of monopoly capitalism is to own the organs of government, essentially using a host/parasite model. First by owning the votes of our representatives and secondly by breaking up service bureaucracies (under the guise of correcting wastefulness). The business of privatizing military support, prisons, schools, the post office, etc., is simply monopoly capitalists guaranteeing themselves riches and power by becoming an unavoidable tax-funded state institution. In succeeding, they also become answerable to neither customers nor voters. Their lockdown on power is ordered and maintained by their subsidiary political machine. Representative government ends.

Anyone with a lot of power who says the same means that he would like some part of our government turned over to him and his cronies. Rich people who say they hate government mean that they hate REPRESENTATIVE government: That they hate any power that doesn’t channel more money to the rich. That’s why rich people are so interested in alternative schools and prisons and military support services. Taking over a piece of the government is a non-competitive gravy train.

It’s sort of like Willy Wonka and the Chocolate Factory: Augustus Gloop will never accept the phrase, “That’s enough for you.”

“In this house, we believe science is real.”

— That Sign

Science is this job:

- Make an observation.

- Ask a relevant question.

- Form a hypothesis or testable explanation.

- Make a prediction based on the hypothesis.

- Test the prediction.

- Have your test and its results peer-reviewed and replicated by other scientists.

- Confirm that those results are statistically meaningful and consistent.

- Iterate (repeat): use the results to make new hypotheses or predictions.

This is the creation process for scientific facts. This process produces the “Proof” of science that we believe in, due to the rigorous method of science. This value only exists in results that have verifiably completed every step in this process.

A fact can only be a scientific fact if it owes its existence to the scientific method. Concerning every other subject on earth, science is objectively not even relevant. What science isn’t is an essential aspect of understanding what it is. Or you might say that concerning any other subject on earth… science doesn’t even exist, because science only exists in this process.

There are absolutely loads of facts that are meaningful, important, and objectively true but have no connection to science at all. There are crazy shit tons of facts that are beautiful, or scary, or somewhat but not completely true. There is another insane amount of facts that are subjective, personal, and anywhere from sort of to mostly untrue objectively.

Then there are ideas, so many millions of them, that generally rest on some awkward constellation of cherry-picked examples from any sort of combination of the preceding facts, including the scientific ones. These cannot prove or disprove whatever the cherished idea is; they are there as character witnesses called to testify in the case. Their impact on the jury is generally emotional or an appeal to existing assumptions.

The only aspects of science that even call for belief are the method itself, and the unambiguous results (when present) of that applied method. But please remember that not all scientific facts are equal. Unambiguous, repeatable results are high-quality scientific truth, 5-star scientific facts, whereas ambiguous, hard to repeat results are low-quality knockoffs coasting on the reliability of high-quality results.

You should believe that science is real, where and when this process is complete and its results are unambiguous and replicable. Any fact NOT born of the scientific method is not a scientific fact. And some facts that are scientific by following the method are nonetheless preliminary, inconclusive, and unreliable.

Everything else is either data, opinion, or speculation. There is nothing wrong with data, opinion, or speculation, but none of these are conclusive and none are scientific.

So please remember this: if a scientist speaks of spirituality, mysticism, religion, the meaning of life, etc., they are officially just another shmoe like all the rest of us, with no more credentials than any of the rest of us, and they deserve no more trust or belief than anyone else does.

Scientists speculating beyond hard data they are well informed about are ethically wrong if they are asserting (even tacitly) any scientific authority. They are abandoning the scientific method, violating the principles that make it meaningful, being dishonest, and committing a famous logical fallacy: the “Appeal to False Authority”.

This is no insult to science. We can properly celebrate the amazing power of science only by remembering its limits as well as its strengths. Remembering these limits renews the simple, solemn pact science has made with us: a promise to adhere to the very terms and conditions that it uses to define itself.

(Memory from 2015)

Last Sunday night I went to bed late, around 1:30. I had to get up at 6 am to get Isaac ready for school and I cursed my stupid restless brain for setting me up to get 4.5 hours of sleep. So then I’m sleeping and something is worrying at me from far off. I’m down in a dream and it’s as if I hear someone calling me. Still really unconscious I’m trying to figure out what’s wrong. I hear my front gates moving, creaking, rattling.

My front yard is enclosed by a tall fence and a set of swinging gates that I routinely lock. It’s like the front room of my house, which happens to be outdoors. I’m used to hearing the gates moving in high wind but it’s obvious to me that the night is absolutely still except for my gates. It slowly comes to me that someone is struggling with the locks, working to get them open. It’s just two hook and eye latches and a slide bolt. Unfortunately, they are set up more to send the message that I don’t want drop-ins than to defeat a concerted attempt to get in. There’s also a bungee stretched across, mainly to keep the gates from moving much in a high wind.

Someone is pushing, pulling, reaching over and under to undo them and I hear them succeeding. Adrenaline. Eyes open. The clock says it’s 4:30 am. My brain is ransacking itself for some story where this is nothing bad. Run to the dark living room and look outside. It’s just what it sounds like: Arms, legs, and torso, pushing, squeezing through the gates, held back only by the bungee now. My brain keeps trying to see in his outline someone I know, someone who is there because they need my help. Nope.

I’m naked. I can’t confront someone like this, so I run to the bedroom to find clothes, and somehow can’t find fucking anything right. Where’s my robe? There! I pull it around me while running back to the living room.

He is through the gates now and he is carefully latching them all back up from the inside. He’s middle height, dressed in black, black hair. Middle Eastern? Indian? Latino? He can’t see in because it’s bright out front and dark in here.

Isaac is asleep in his room perhaps 25 feet from where I’m standing, the door slightly ajar. I have a samurai sword on a shelf nearby and I reach for it, feeling very self-conscious like this is a cringey, ridiculous thing to do. I feel like I’m filled with freezing electricity. He’s peering around at the front of the house, I’m coming closer, watching him through the window. He throws something like a cloth or a towel down in front of the front door. He reaches out and puts his hand on the doorknob.

I rap loudly on the window. He startles wide-eyed and focuses on me in the shadows.

GET THE FUCK OUT OF MY YARD!!! I shout. Suddenly thinking of Isaac, please don’t wake up.

His face leans in toward me looking upset and beseeching.

“I just need to come in for a while,” he says, his hand on the doorknob again.

GET THE FUCK OUT OF MY YARD!!! I shout again, brandishing the sword. This is bad but it feels better than the moments of hiding and watching. He looks SO sad. He returns to the gate and begins undoing the locks and squishing himself out under the bungee, still in place.

A moment later, I’m outside redoing the locks and adding things to prevent reentry. As I come back in I realize the thing he threw down in front of my door is my own welcome mat, which had been hanging up to dry after cleaning. I can’t believe it, but Isaac is still asleep. I call 911 and tell them, then somehow eventually get back to sleep. I wake at six, wake up Isaac, and make him breakfast. I don’t say a word about what happened.

This is maybe the oddest moment of my life but it is also far from the most important. It is an indelible, insoluble small mystery shaped like a child’s ridiculous brag. What I love about this story is that it offers no sensible resolution, nor does it actually appear to mean anything in particular. It’s absurd and impossible but obvious to the naked eye and witnessed by dozens on an otherwise ordinary Spring day. This story takes place in Greenville, South Carolina.

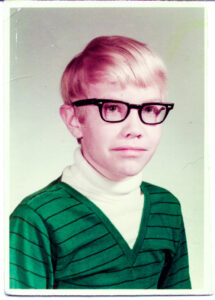

I was at school, in gym class, playing baseball. I was 10 years old, I was small and blond – with big glasses – and notoriously bad at sports. I came up to bat dreading the usual failure and the indifferent contempt of my teammates.

There’s the pitch, I hit the ball solidly. It sailed away from the baseball diamond all the way to the playground where it hit the middle of a HUGE tree as tall as a four-story building, and too big for me to put my arms around. The ball went THOK off the tree as I ran. As I neared first base there was a huge splintering CRACK and the tree collapsed cinematically across the playground which, thank god, was empty of kids.

The game was called on account of amazement.

I was actually carried on the kids’ shoulders into school… into the gobsmacked principal’s office for the coach to inform him. The next day the school had to hire guys with chainsaws to come and cut it up. It loudly took the entire school day. I sat through my classes listening to the chainsaws singing of my glory. As we left that day (and forever after) the carved up parts of my tree were stacked in a huge pile at the far edge of the playground like the bones of Goliath.

It was an enigma that came to visit me in front of everyone like a dazzling celebrity giving me in particular finger guns and a grinning wink. There you go, kid.

Why stress hormones and fight or flight response are part of ADHD “Teaching”

Here’s something that happens to ADHD children a lot: Getting pushed beyond their limits by accident. Here’s how it works and why it’s so bad.

The child says, “I can’t do this.” Adult (teacher or parent) does not believe it, because Adult has seen Child do things that Adult considers more difficult, and Child is too young to properly articulate why the task is difficult.

Adult decides that the problem is not a true inability but something else, such as laziness, lack of self-confidence, stubbornness, or lack of motivation.

Adult applies motivation in the form of harsher and harsher scoldings and punishments. The child becomes horribly distressed by these punishments. Finally, the negative emotions produce a wave of adrenaline that temporarily repairs the neurotransmitter deficits caused by ADHD, and the Child manages to do the task, nearly dropping from relief when it’s finally done.

The lesson the Adult takes away is that Child was able to do it all along, the task was quite reasonable, and Child just wasn’t trying hard enough. Now, surely Child has mastered the task and learned the value of simply following instructions the first time.

The lessons Child takes away? Well, it varies, but it might be:

- How to do the task while in a state of extreme panic, which does NOT easily translate into doing the task when calm.

- Using emergency fight-or-flight overdrive to deal with normal daily problems is reasonable and even expected.

- It’s not acceptable to refuse tasks, no matter how difficult or potentially harmful.

- Asking for help does not result in getting useful help.

…………..

Not mine, source:

A lot of us Fucking Love Science and I’m glad about that but overly romantic thinking about Science means we are naive in a world that exploits naivety. This (happily) working scientist explains the rat race aspects of vocational daily science with a special insight into the perverse role of journalists as sloppy, inaccurate promoters of studies in search of public attention. Journalists shake dollars loose with exciting stories that are sometimes even partly true. This cycle points to the danger of scientists getting sloppy too, rushing to publish, making headlines sound sexy, and emphasizing results that are rarely replicable just to keep playing the (now degraded) game. We should think about this stuff. Over a long enough period, that’s a death spiral for what we fucking love about science.*

Scientist –

People think my job is to search for deep truths, understand the meaning of life and how the world and the universe works.

In reality, my main job is to write papers and get grants so my institution can build its reputation and get money. Most research that is published is wrong in some way (except for analytical work on theory), and not because we are being dishonest. It’s because we need to publish a lot and there really isn’t time to zero in on some ultimate truth, we just need to get things right enough to publish. And even if we had all the time in the world, there is the issue of experimental design. For one thing, experimental design is very subtle and difficult and most of the papers I read didn’t really do the right experiment to support their point. You can ask them to do more experiments as a reviewer, but as you can expect, this is not your favorite thing to read when your paper is reviewed because it means more time and money you probably don’t have.

That brings me to the other problem, even if you do have the time, do you have the money? Enough people? The right equipment? An infinite number of just the right test subjects? You see where this is going…

So we write our paper and try to make as big a splash as we can so we can get promoted in our jobs, get tenure, and get more grants. (Also because we want to get the information out there for others to use, research that is unpublished is just a hobby.) The institution wants to peddle this work for more clout, so they write up a press release that over-simplifies the research and over-extrapolates the possible importance of the findings. This is sent to journalists who don’t read the actual paper, but rather report based on the press release an even more overly simplified version of the paper, now with wildly speculative implications for humanity, the earth, and/or understanding the universe.

This is how you can publish a paper that shows that mice react kind of funny in a statistically significant way to being exposed to a chemical that is commonly used in ink, and then you read in the New York Times about how using ballpoint pens will kill you.

EDIT: My main point here is that people should be skeptical about science, particularly what they read that is written by journalists – whether it is news articles or actual books. Popular science books were once written by the scientists themselves, now more often they are written by journalists. And there are people who are motivated more by telling a good story than showing what science is really like – full of mistakes and uncertainty, and normal pressures of any career, it is not just the pure search for truth.

But I don’t mean at all to say that being a scientist is terrible (I feel the opposite), I just don’t like the over-simplified way people view science as some pure source of distilled perfect truth. It’s not, but that’s not a bad thing. Understanding life is not simple, nor is understanding how the universe works. The fact that it is so complex is what makes being a scientist fascinating, but it is also what makes writing a best-selling book that tells the whole story a bit harder.

u/zazzlekdazzle, Reddit

*- Like so many other things that depend on stockholders for sufficient funding, the important work becomes hollowed out inside and drifts further from its purpose.

Psycho comes from the Greek word psykho, which means mental. The Greek root word path can mean either “feeling” or “disease.” So psychopath is a word meaning “mental illness”. “Sociopath” is not a clinical term and it is a no-no for mental health professionals to use it. However, I am NOT a mental health professional, and the name is rather on point about the issue: sick towards society, towards people. In the 1830s this disorder was called “moral insanity.” By 1900 it was changed to “psychopathic personality.” More recently it has been termed “antisocial personality disorder” in the DSM-III and DSM-IV.

DSM-IV Definition: Antisocial personality disorder is characterized by a lack of regard for the moral or legal standards in the local culture. There is a marked inability to get along with others or abide by societal rules.

It’s easy to take the DSM on faith, at face value, as sufficient authority to settle the issue of who is or isn’t a psychopath, but the needs of the mental health community and those of others who have to deal with psychopaths don’t line up perfectly. The DSM criteria depend heavily on observed behaviors, while law enforcement and criminal justice must often predict behavior based on personality characteristics.

To love somebody

who doesn’t love you

is like going to a temple

and worshipping the ass

of a wooden statue

of a hungry devil.

– Lady Kasa

At your service!

The term “grey goo problem” was coined by nanotechnology pioneer K. Eric Drexler in his 1986 book Engines of Creation. It supposed a self-replicating nanobot going out of control and, in a “sorcerer’s apprentice” way, recognizing NO stopping point for self-duplication. The Earth is left as a lifeless desert of “grey goo”, composed of the bodies of the nanobots.

This scenario joined the library of science fiction plots, where it continues to appear. In 2004 Drexler stated, “I wish I had never used the term ‘gray goo’.” He was probably conducting a form of due diligence by considering bad outcomes as well as good. As time has gone by, grey goo has been debunked as a concern in various ways (look it up if you are interested; I’m not here to explore all that).

But there are other environments and other bots.

The Internet became a primary human environment at lightning speed, filling up with websites that represented more and more real societal institutions. Initially, they were mostly billboards for providing information. Gradually these online presences became interactive and even took over as the “real world”, the business end of everything. The isolation of solitary individuals running errands on the web protected society up to this point. That collapsed when we embraced social media and were reborn as mobs composed of socially isolated individuals.