You may not know it by name, but it probably affects you multiple times each day. Filter bubbles are created by algorithms that track a visitor’s choices on a website and selectively feed them tailored options when they return. There are a thousand variations of this on the web. When you shop at Amazon, what you search for affects the results you’ll see next time. Every time you watch a YouTube video, a hodgepodge of results influenced by that viewing appears among your recommended videos. There are even “cookie relationships” between different websites, where what you look at on one can influence what you see THE FIRST TIME you visit another. Nobody sees the same Facebook, Reddit or YouTube.

This is enough to give many people a creepy “shadowed” feeling, while others may shrug and say it’s all anonymous really, so why get into a sweat? The websites would certainly claim it is only about providing better service by fine-tuning your experience to better fit your interests. Of course, better service is always really about better revenue. Plus, who are they to say that my experience is better rather than overly managed? It probably comes down to two not-very-nice things:

1. Their anxious sense that they need to control the people visiting the site. I think it unnerves investors and managers to imagine visitors wandering chaotically around, out of control, doing what they want in an unmoderated way. I think they feel (not think) that if they are NOT manipulating people and trying to force them through filters they’ve devised, they are failing to do their job.

2. A related but not EXACTLY identical issue: the sense that the product must be refined and distilled for extra strength and intensity so it becomes a more powerful experience. In effect, it’s like adding more sugar, salt and fat to fast food. Is it good for business? Yes. Is it good for the customer? Nope.

One important thing to know is that since Dec. 4, 2009, Google results have been personalized for everyone. Google itself has become the meta filter bubble, telling you more of what it has decided you want to know about and less of what it thinks you don’t. There is a conflict of interest here that isn’t attracting much notice. Google (motto: “don’t be evil”) has kept a remarkably low profile about this dramatic change, and getting unfiltered results requires some tricky behind-the-scenes settings changes. Google clearly profits from personalizing search results: tailoring results more closely to users’ personal biases enhances its advertising profits, but it downgrades its vaunted informational purity. Google should offer a clear Personal / Objective choice. And consider this: years from now, where will your shaped results have drifted? Given enough personalization, would a conspiracy theorist, for example, never encounter a competing version of reality?

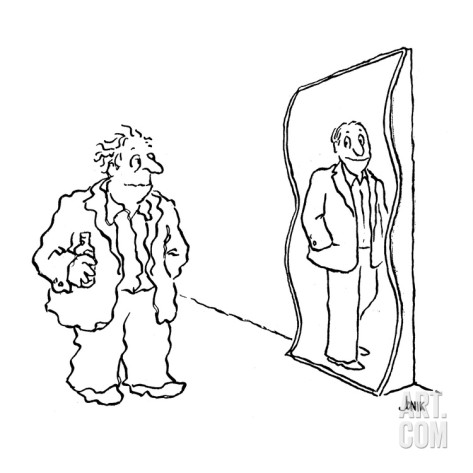

What this whole process reminds me of (especially in this age of extreme polarization) is the way people treat the boss, telling him only what he wants to hear and isolating him further and further within a monocultural, inaccurate echo chamber. What could go wrong, eh? You can imagine all of us gradually glazing over with ever more satisfying drivel.

Eli Pariser coined the phrase “filter bubble”; here he is describing his concerns.